Networks done in Part 2; now we build the SAN. The iSCSI-Target VM gets the iSCSI Target Server role, the iSCSI service gets bound to the storage NIC ONLY, and we carve two LUNs — 100 GB SQL-Data and 2 GB Quorum-Witness — onto a single target so they present together to both cluster nodes. Initiator ACL by IP, CHAP off for the lab.

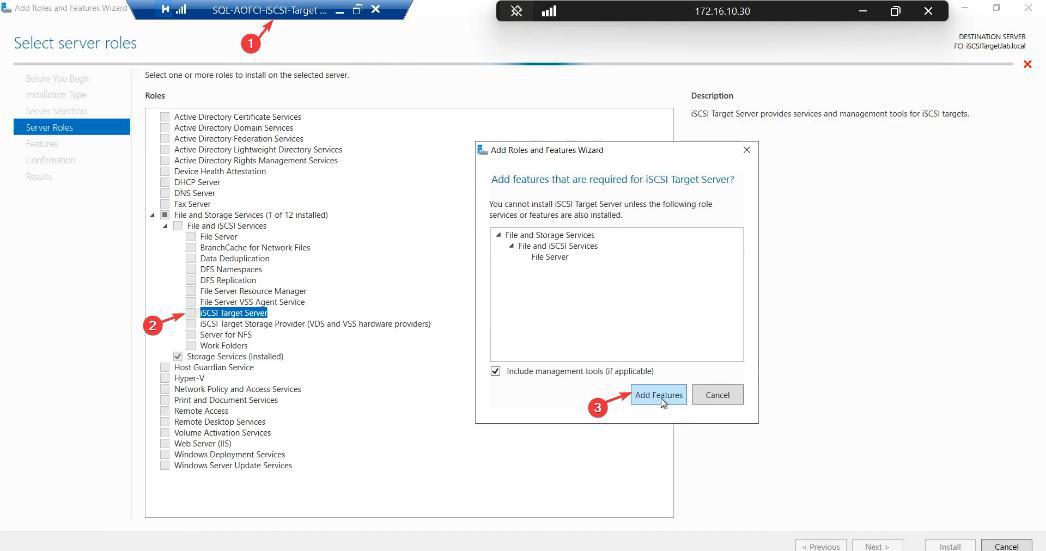

Step 1 — install the iSCSI Target Server role

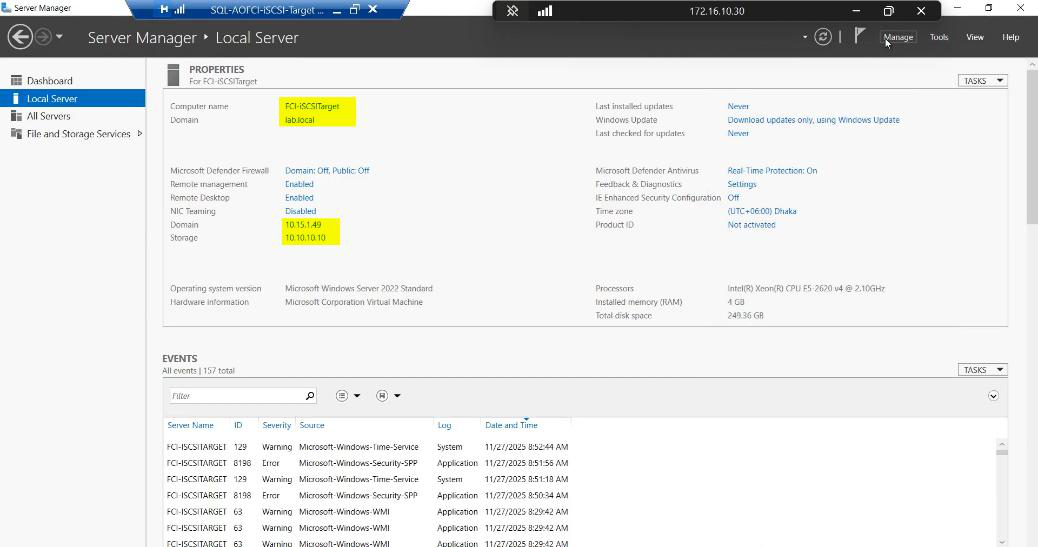

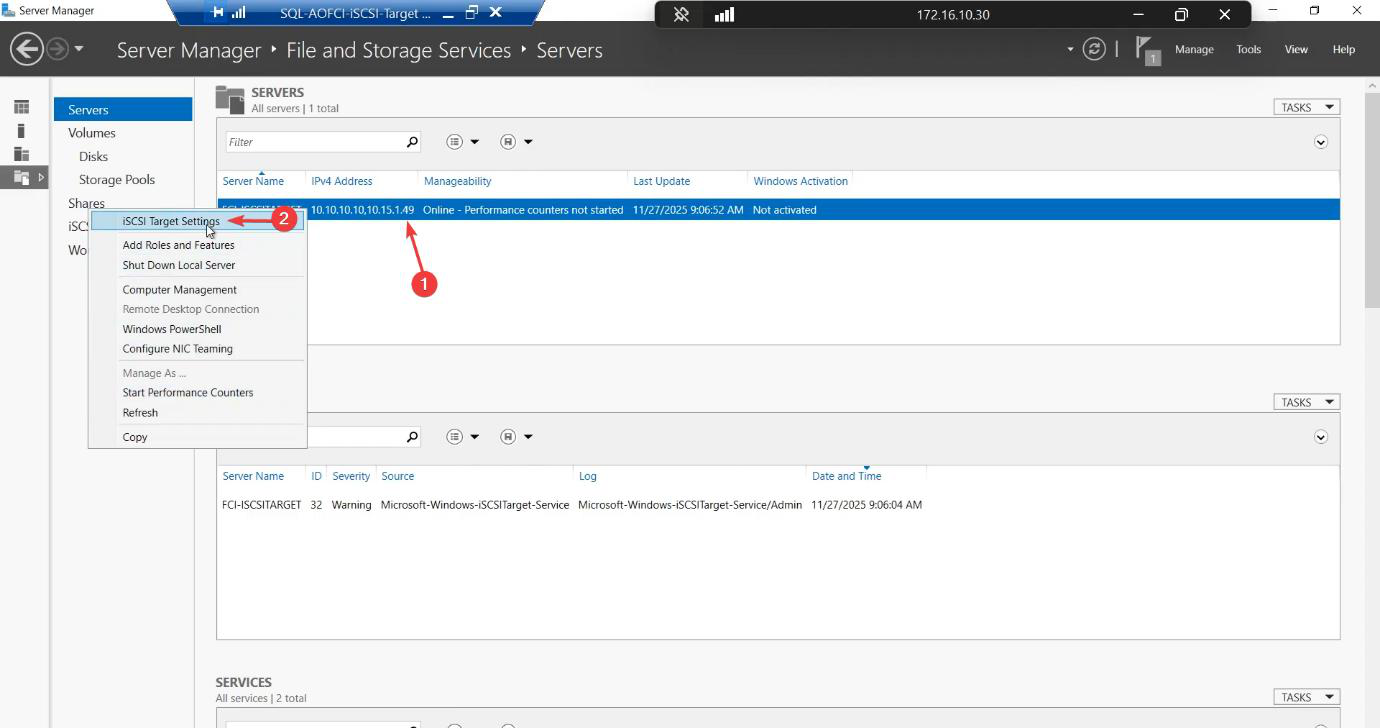

Sign in to the iSCSI-Target VM (the SAN emulator), which has 10.15.1.49 on the public LAN and 10.10.10.10 on the storage subnet. Open Server Manager.

Step 2 — bind the iSCSI service to the storage NIC

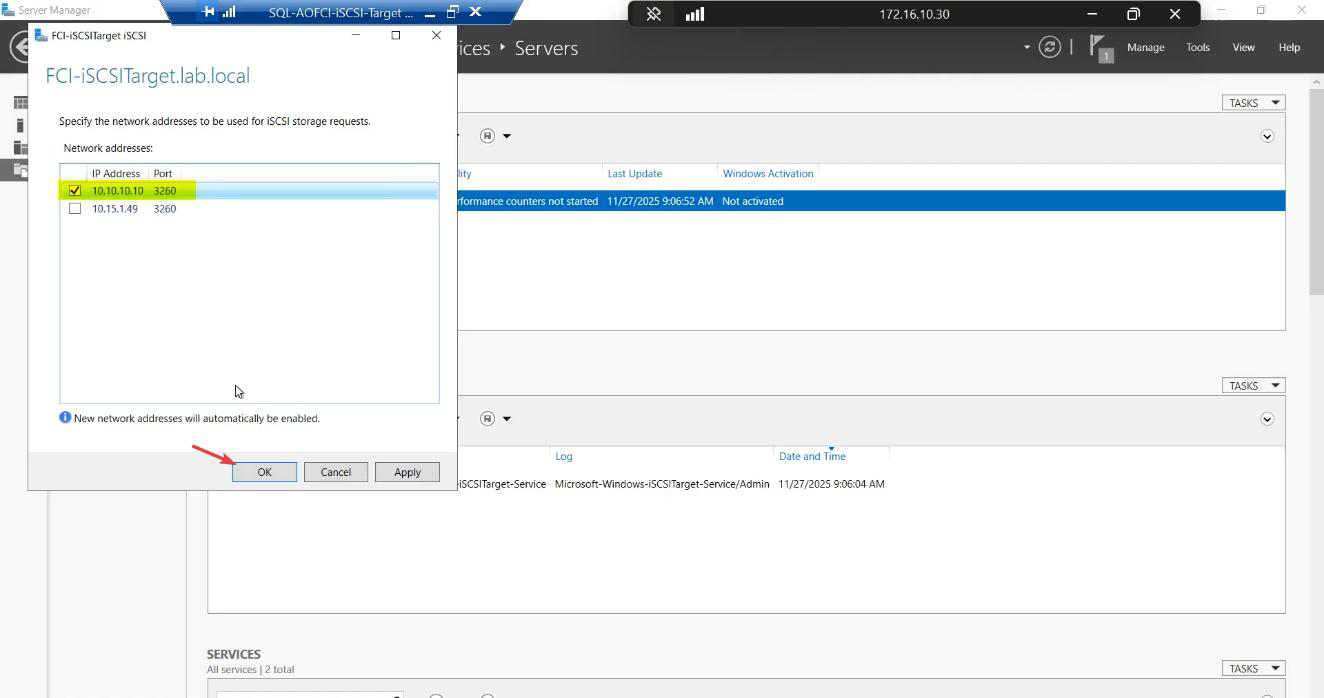

Tick the storage IP (10.10.10.10). Untick the public IP (10.15.1.49). OK. iSCSI must NOT answer on the public NIC; otherwise storage traffic and client traffic share a wire and both suffer.

Now iSCSI logins from initiators only succeed on the storage subnet. The public NIC won’t respond to iSCSI handshakes: if a node accidentally tries to log in over the public NIC, it gets a clean refusal instead of working “by accident.”

Step 3 — create the SQL-Data LUN (100 GB)

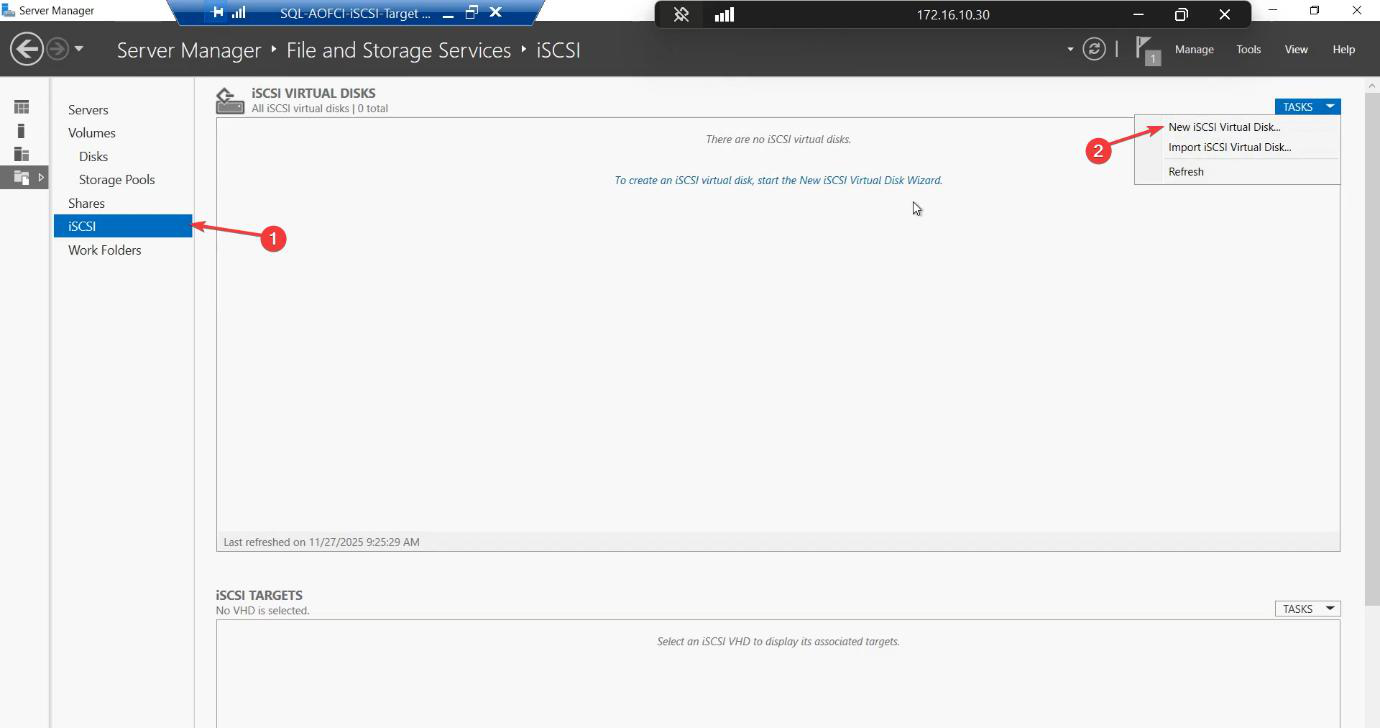

iSCSI section > Tasks > New iSCSI Virtual Disk.

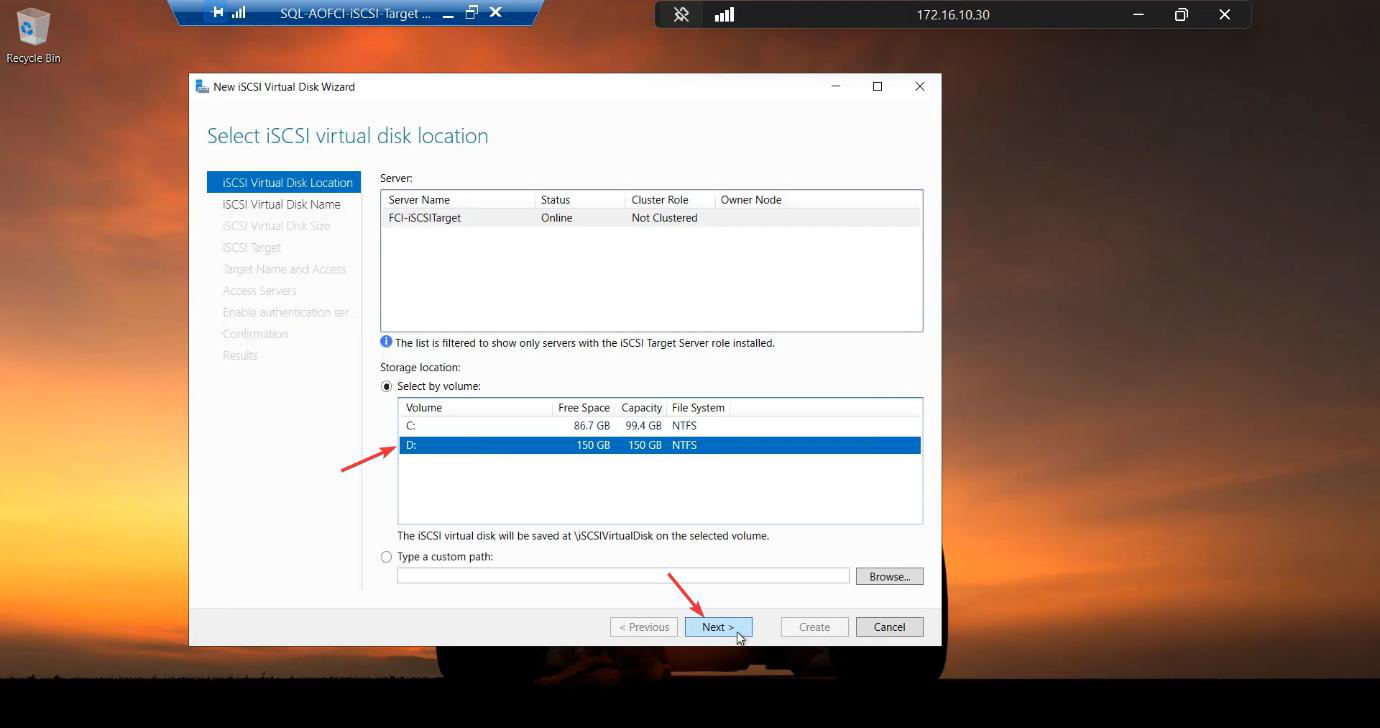

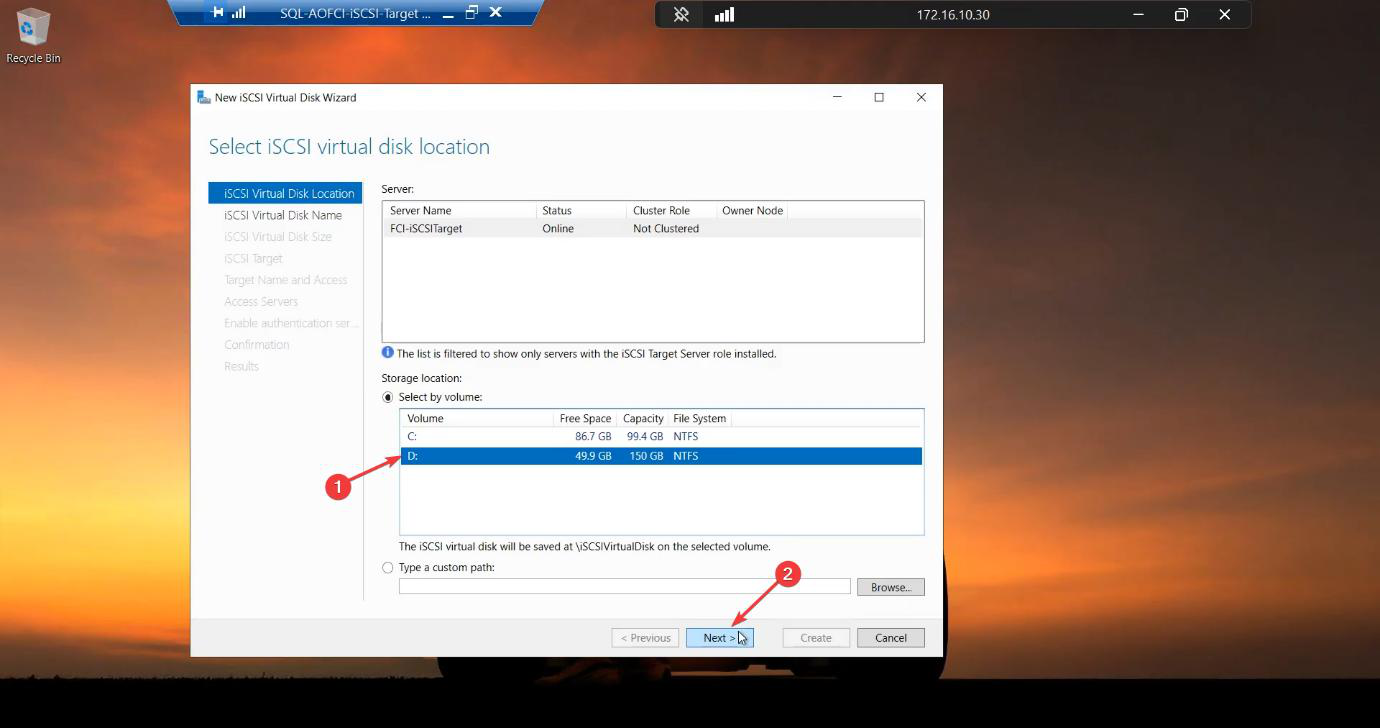

Location: D: volume (the 150 GB raw disk). The D:\iSCSIVirtualDisks folder is created if it doesn’t exist.

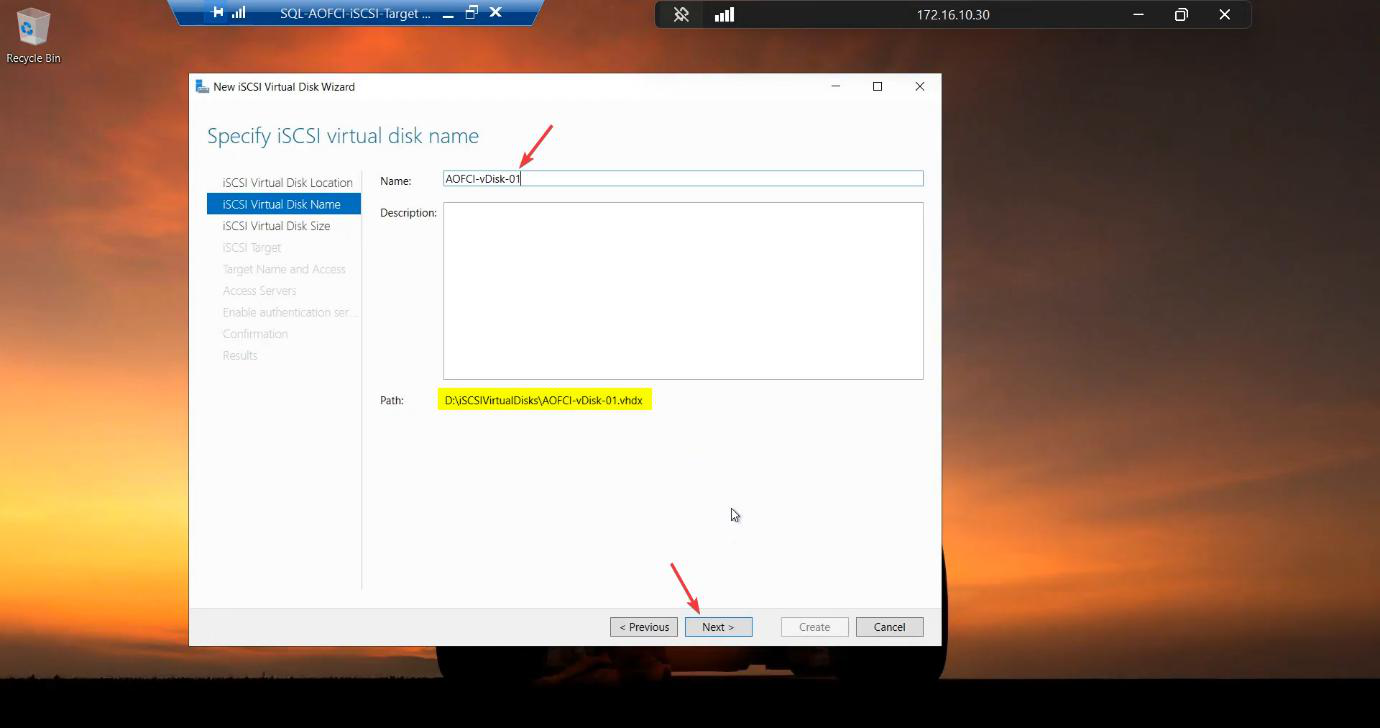

Name: SQL-Data. Use a descriptive name; you’ll see it in cluster Disk Properties later.

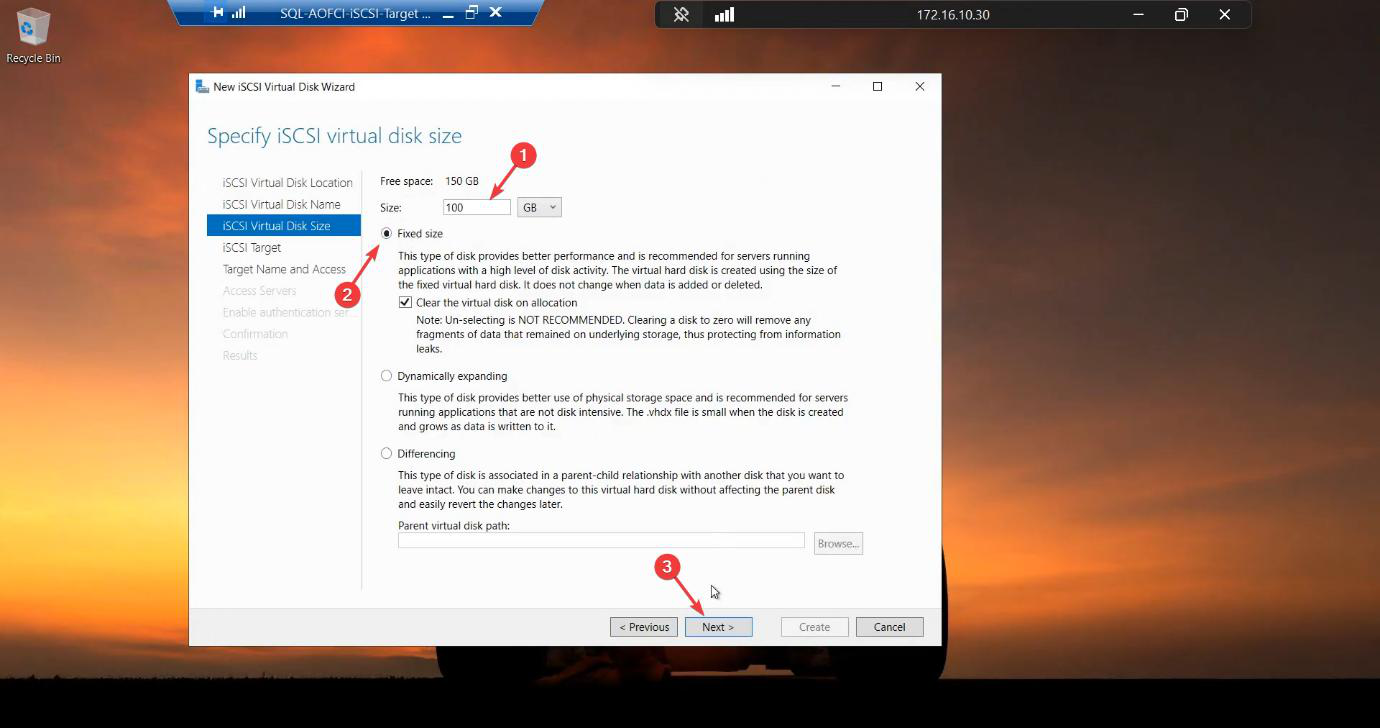

Size: 100 GB. Fixed Size. Fixed pre-allocates the entire file — takes minutes to create but no expand-pauses later. Never use dynamic for production SQL data files. Lab is fine either way.

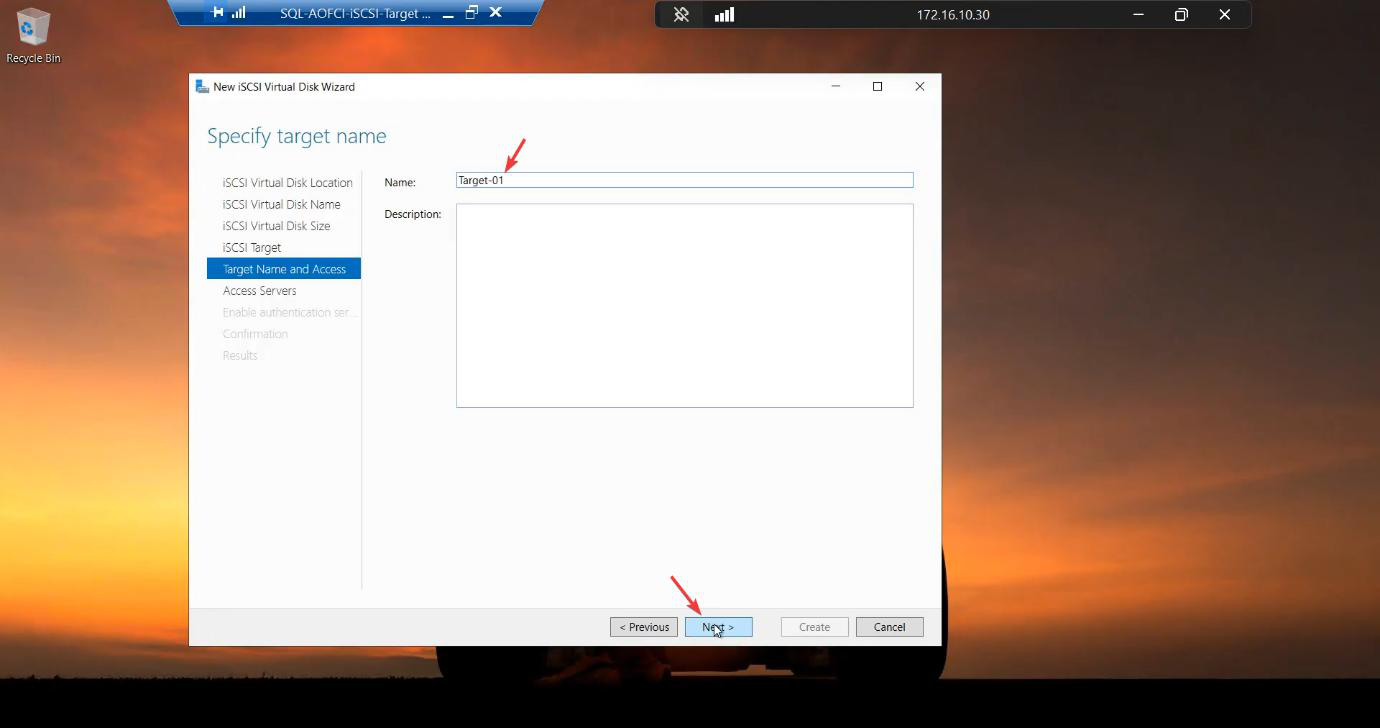

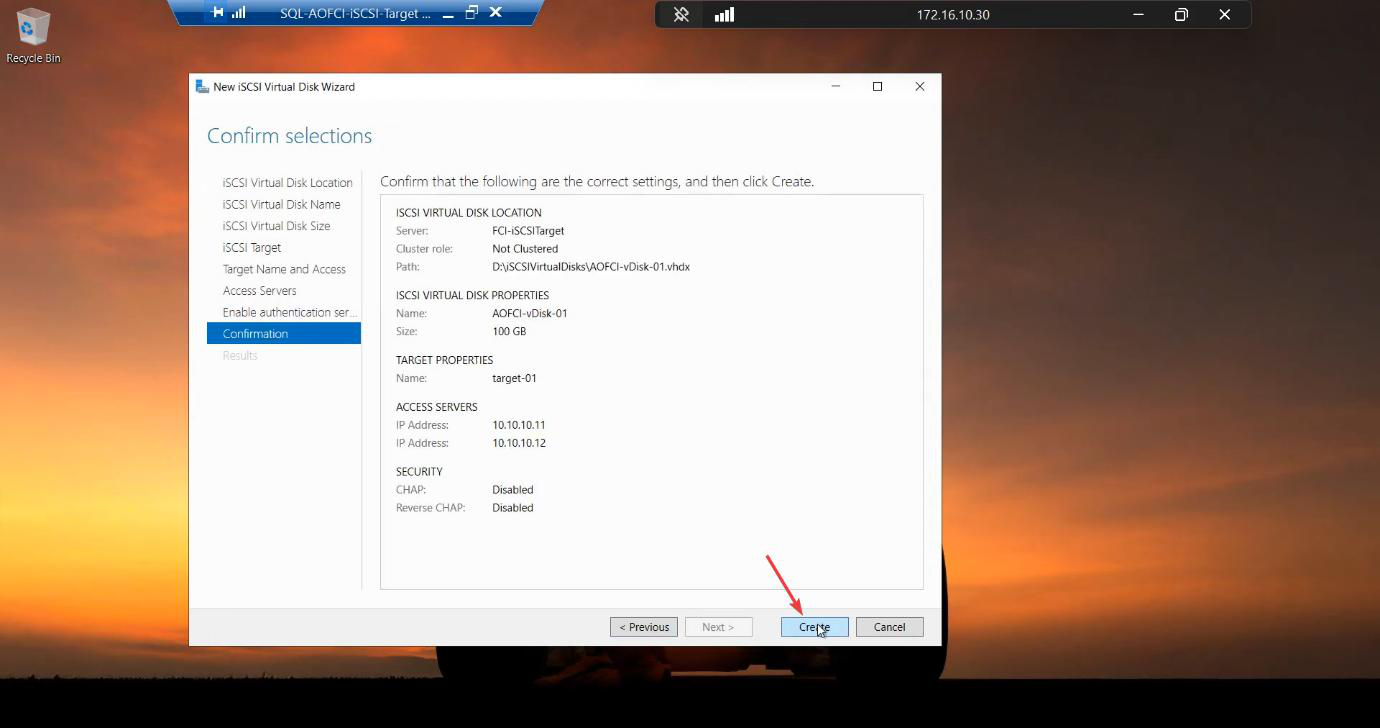

Target: New iSCSI Target. Name: Target-01. A target is a logical group of LUNs presented together to the same initiators; both data and quorum will go on this same target.

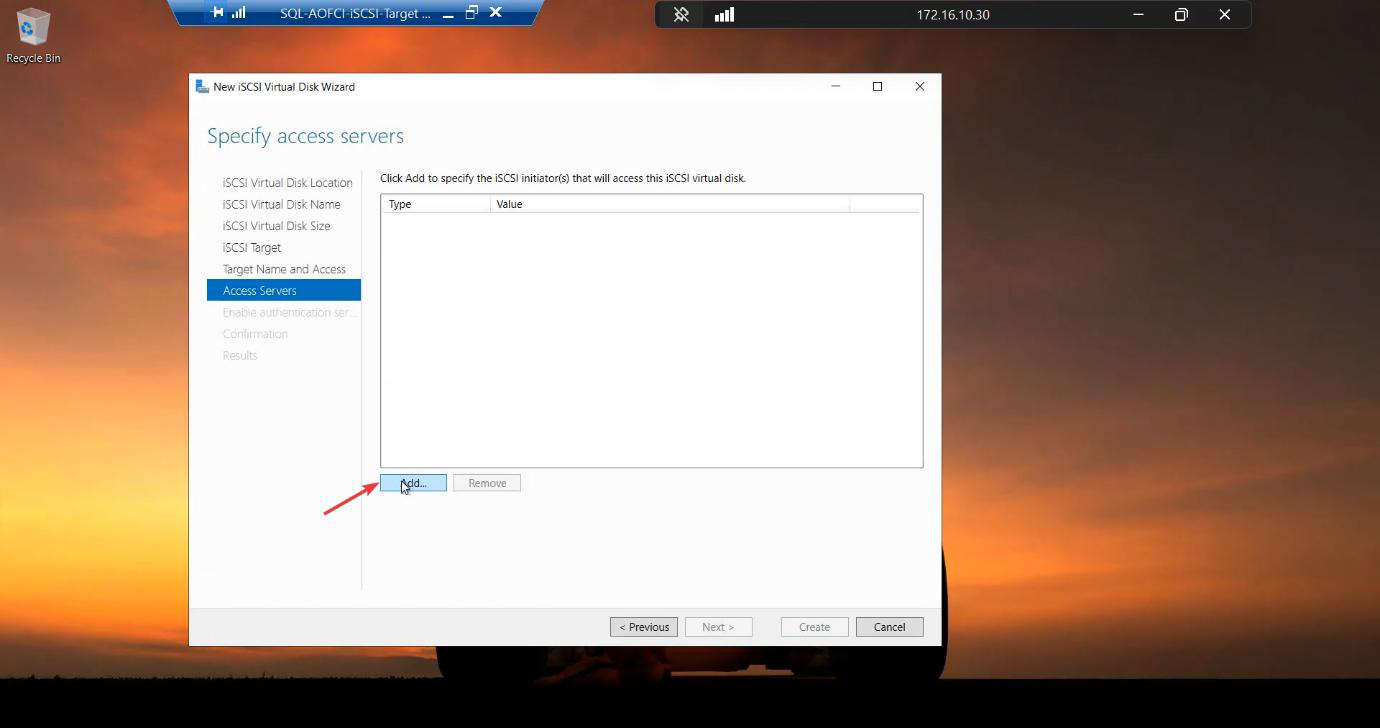

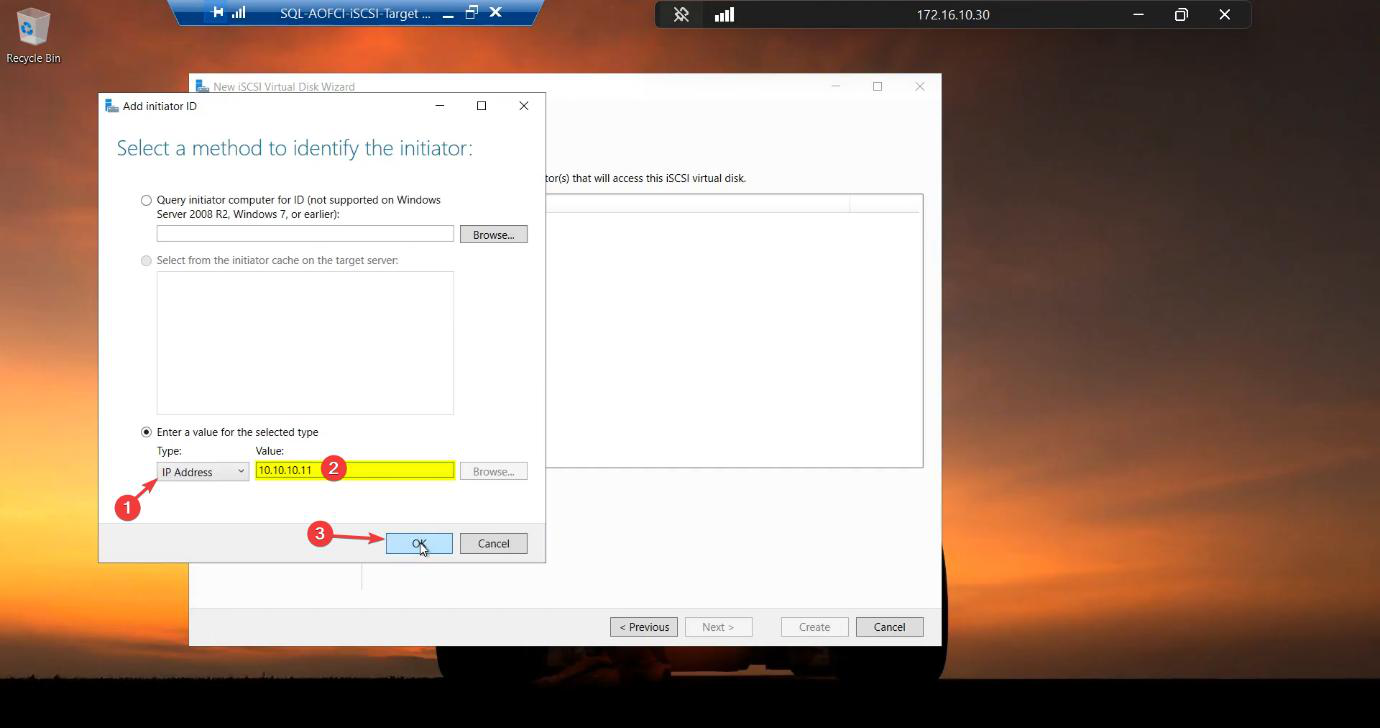

Add the initiator ACL

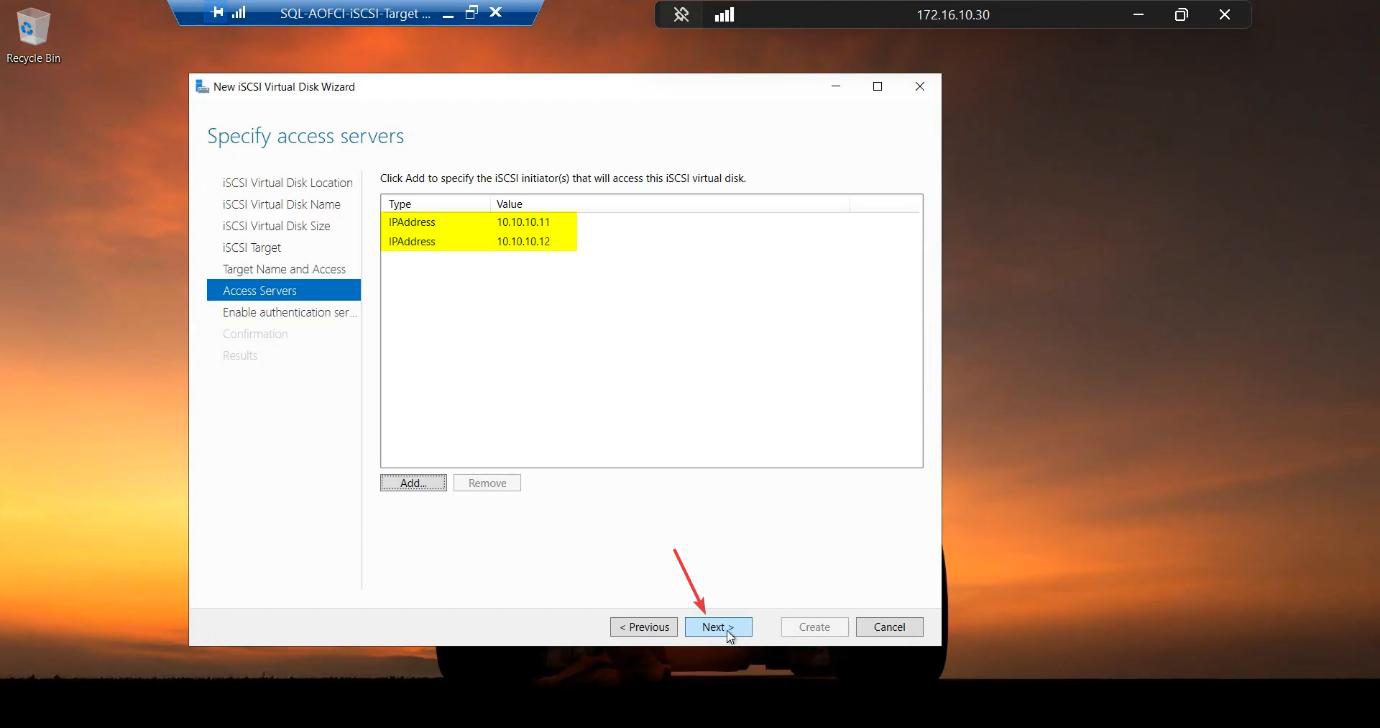

Access Servers > Add. Type: IP Address. Value: 10.10.10.11 (Node-01’s storage IP). This whitelists Node-01.

Repeat with 10.10.10.12 for Node-02. Both nodes are now in the ACL and can connect to this target.
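Since the ACL is IP-based, it is worth confirming that every entry really sits on the storage subnet. The following is a minimal Python sketch of that check, not part of the wizard. The `/24` mask is an assumption (the article gives addresses but no prefix length), and `off_subnet_entries` is a hypothetical helper name.

```python
import ipaddress

# Assumption: the storage subnet is 10.10.10.0/24. The article lists the
# addresses but not the mask, so adjust the prefix to match your lab.
STORAGE_NET = ipaddress.ip_network("10.10.10.0/24")

# Initiator ACL entries as entered in the wizard.
ACL_IPS = {"Node-01": "10.10.10.11", "Node-02": "10.10.10.12"}


def off_subnet_entries(acl, network):
    """Return the ACL entries whose IP is not inside the given network."""
    return {
        name: ip
        for name, ip in acl.items()
        if ipaddress.ip_address(ip) not in network
    }


bad = off_subnet_entries(ACL_IPS, STORAGE_NET)
print("ACL OK" if not bad else f"Off-subnet entries: {bad}")
```

A public address such as 10.15.1.49 would show up in the returned dictionary, which is the typo this check is meant to catch.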

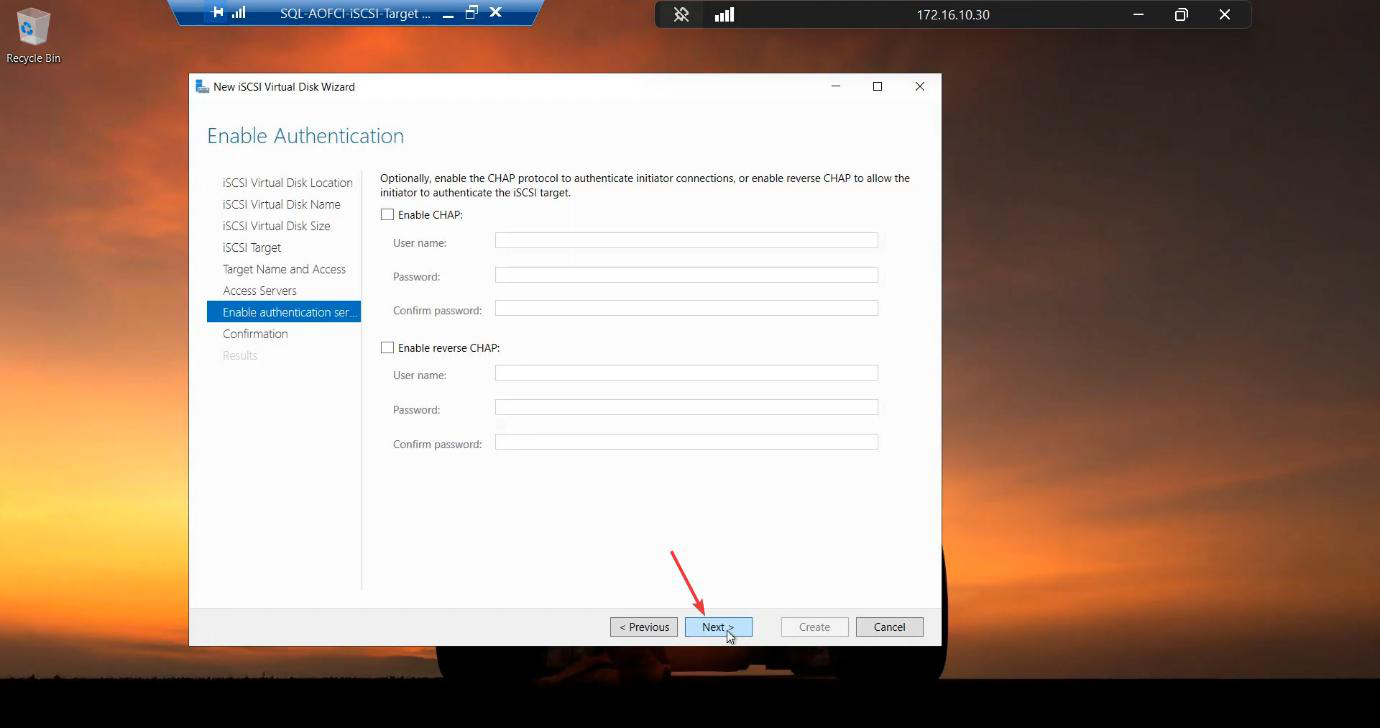

CHAP authentication: disabled for the lab. Production: enable CHAP with mutual auth, OR scope by IQN (more durable across IP renumbering).

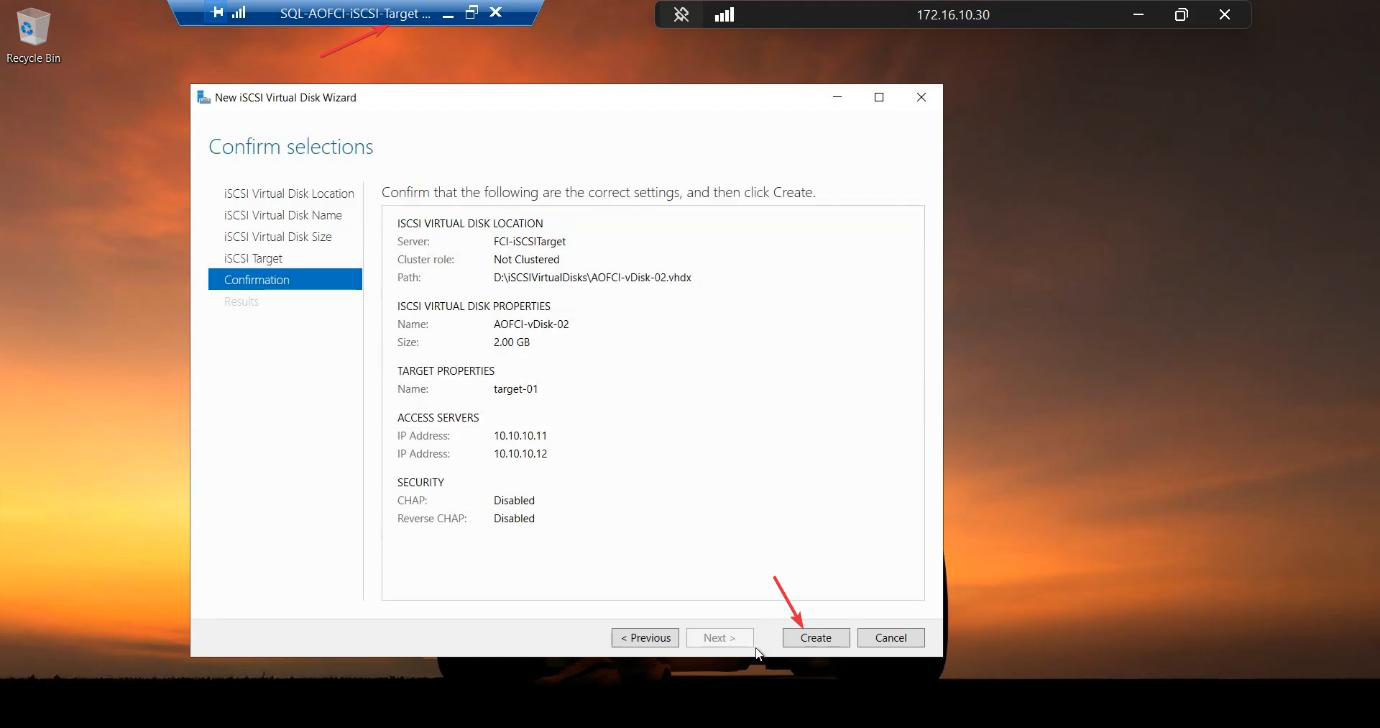

Review.

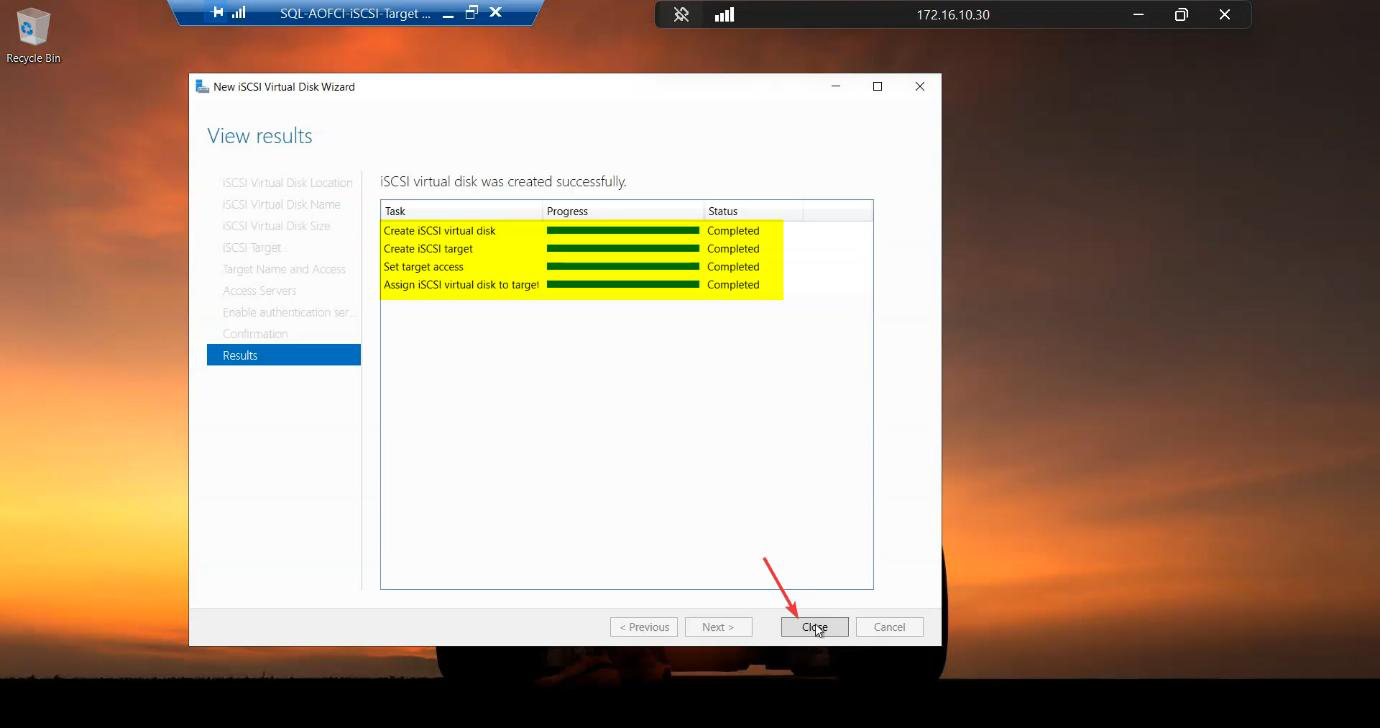

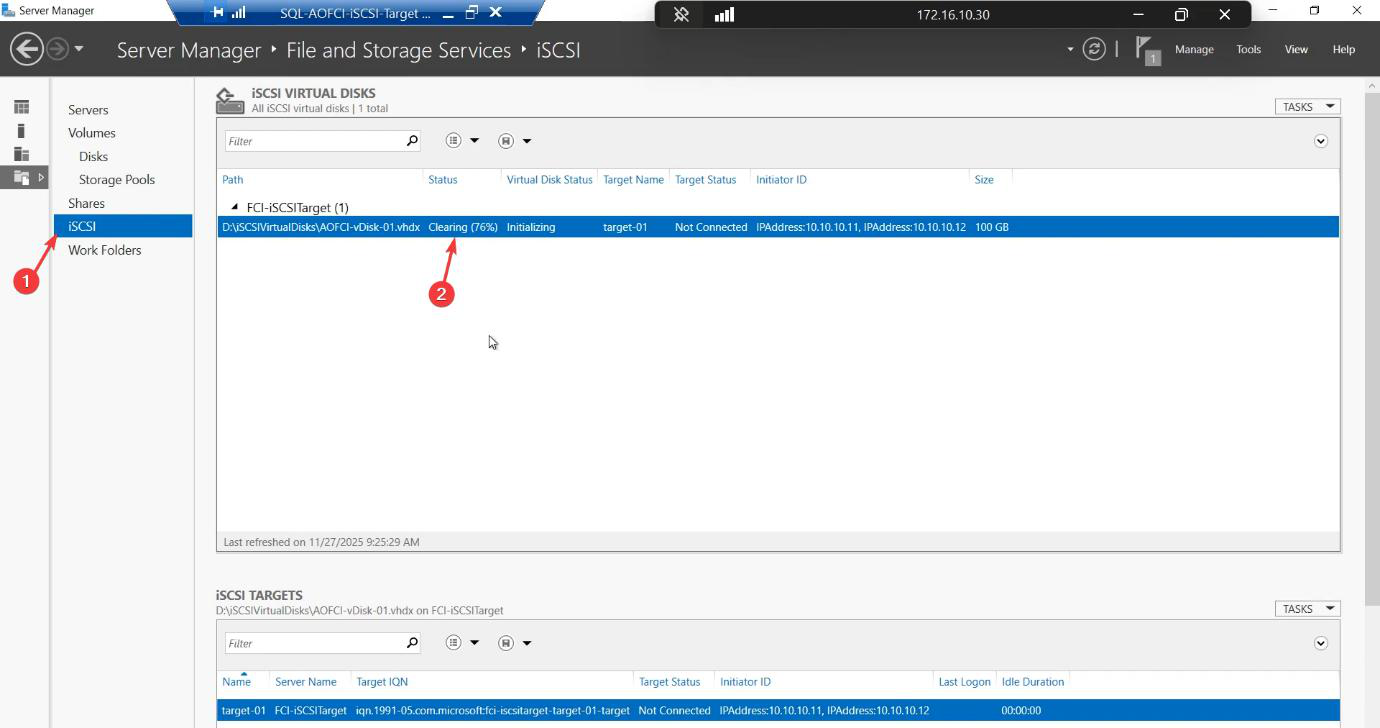

Created. SQL-Data.vhdx now lives on D:. Status Not Connected until initiators log in (Part 4).

Step 4 — create the Quorum-Witness LUN (2 GB)

The quorum witness is the cluster’s tie-breaker. In a 2-node cluster, if the heartbeat between nodes fails but both can still reach storage, BOTH nodes might try to take ownership of the disks: the dreaded split-brain. The witness is a third “vote” that prevents this.
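The vote arithmetic can be sketched in a few lines of Python. This is a simplified majority model with a hypothetical `has_quorum` helper. It ignores refinements such as dynamic quorum in real Windows clusters and only illustrates why a third vote matters.

```python
def has_quorum(votes_held: int, total_votes: int) -> bool:
    """A partition may keep running only with a strict majority of all votes."""
    return votes_held > total_votes / 2


# Heartbeat lost in a 2-node cluster with no witness: each side holds 1 of 2.
print(has_quorum(1, 2))  # False: neither node has a majority

# Same failure with a witness: 3 votes total, one node also holds the witness.
print(has_quorum(2, 3))  # True: this node keeps the disks
print(has_quorum(1, 3))  # False: this node must stand down
```

With two votes there is no way to break a tie. With three, exactly one side can hold a majority, so only one node ever owns the disks.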

New iSCSI Virtual Disk > D: volume.

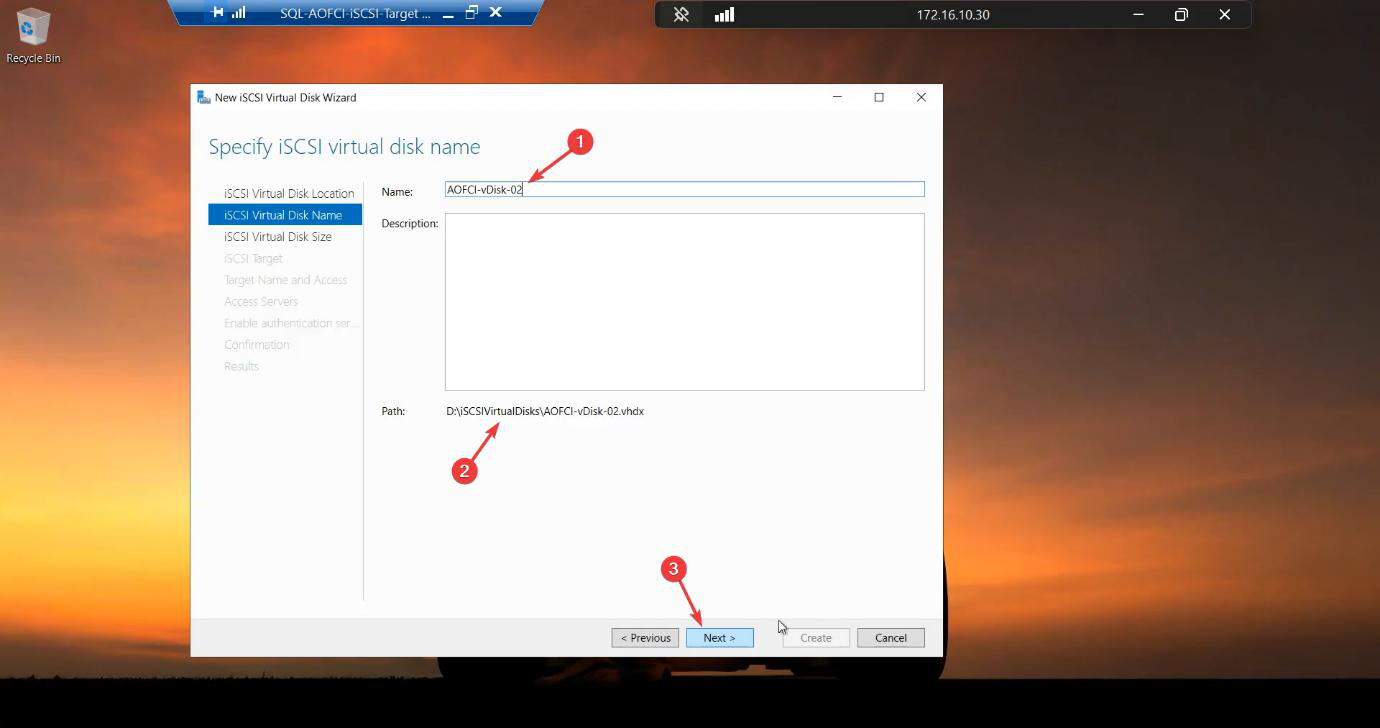

Name: Quorum-Witness. This is the cluster tie-breaker LUN.

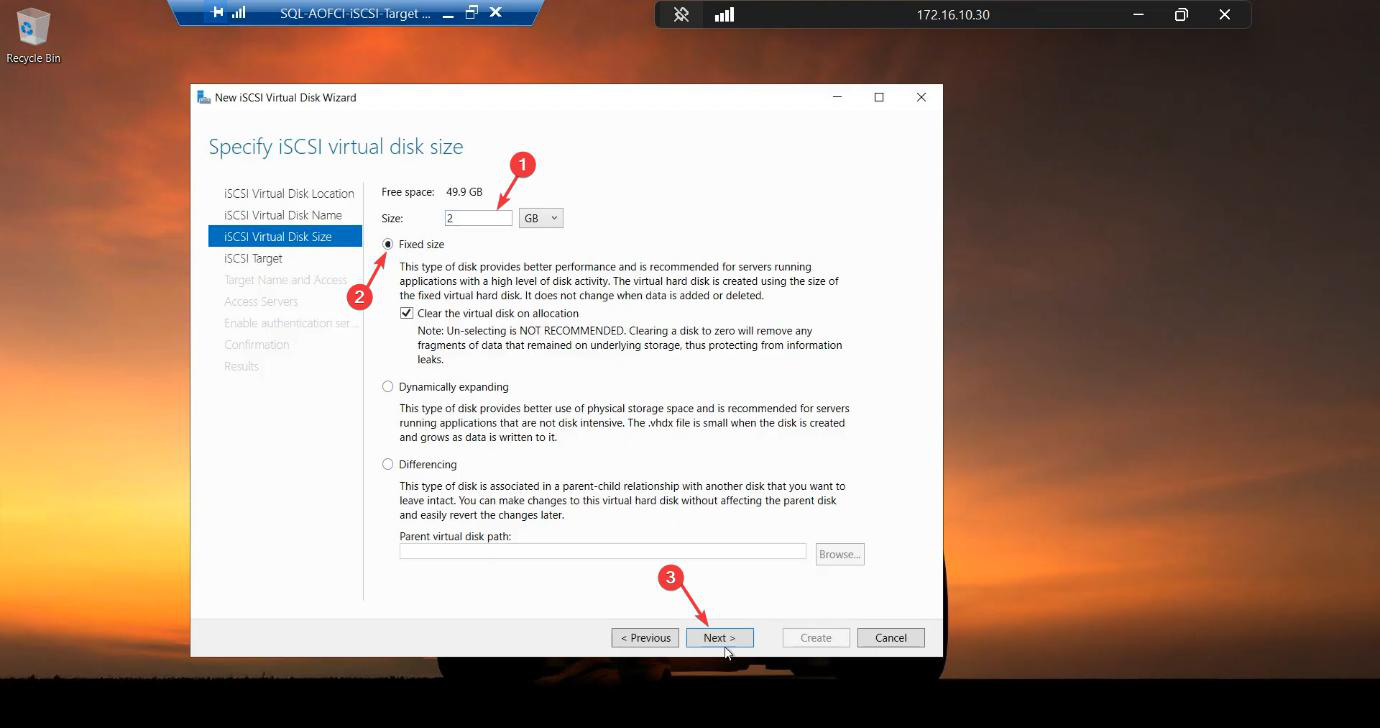

Size: 2 GB Fixed. Tiny. The witness stores cluster metadata only, no user data.

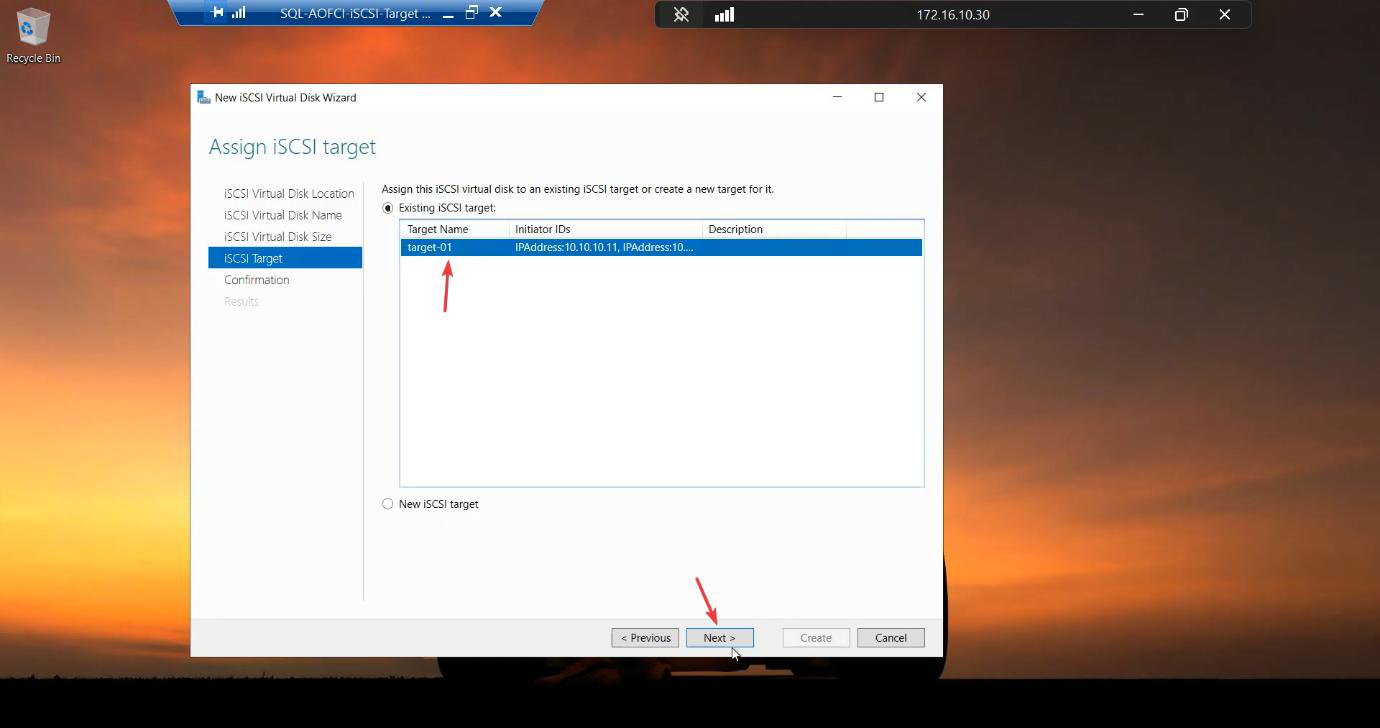

Critical: on the iSCSI Target step, select Existing iSCSI Target → Target-01. Do NOT create a new target. Both disks must present together to the same initiators; otherwise the cluster sees them as separate storage groups and configuration gets messy.

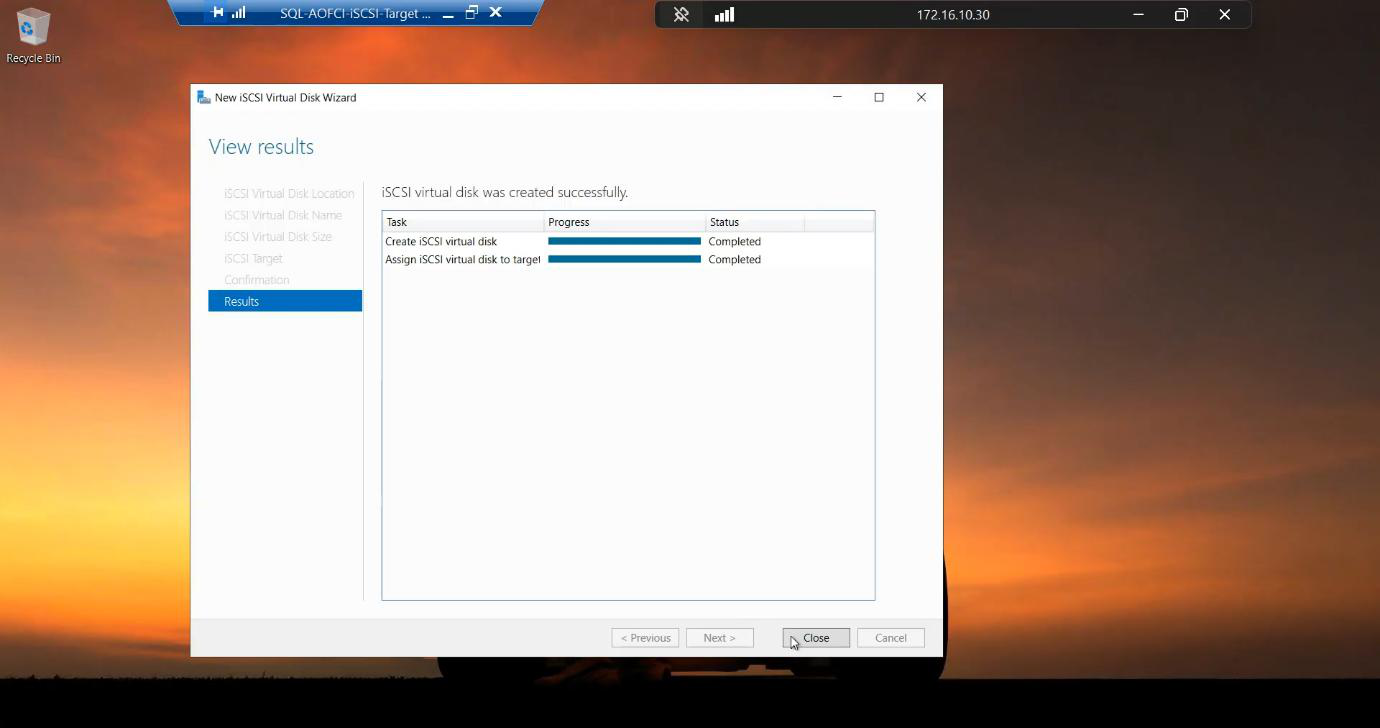

Created.

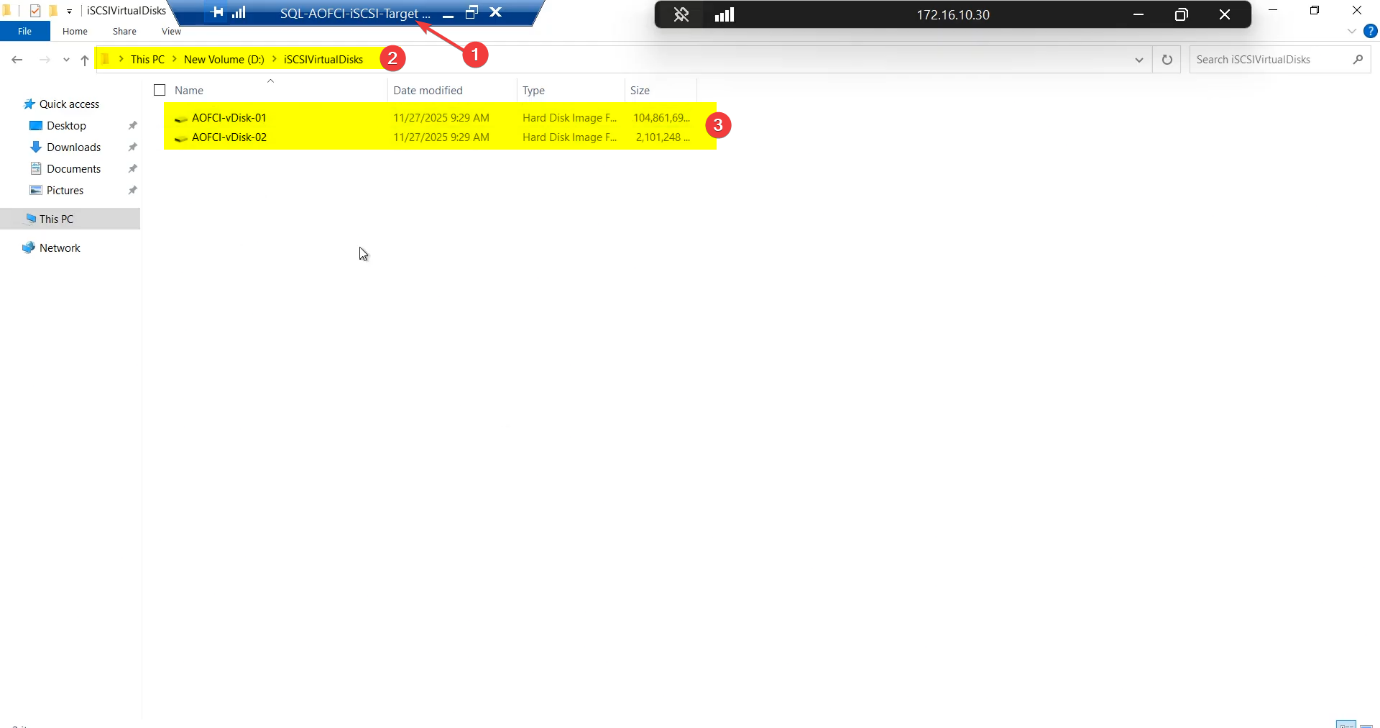

Step 5 — verify both disks exist

File Explorer > D:\iSCSIVirtualDisks\

SQL-Data.vhdx (~100 GB) and Quorum-Witness.vhdx (~2 GB)

Both fixed-size, both on Target-01, both whitelisted to Node-01 and Node-02. SAN side is complete.
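If you prefer a scripted check over eyeballing File Explorer, the sketch below does the same verification in Python, assuming Python is available on the target VM. `check_virtual_disks` and the 5% tolerance are illustrative choices rather than anything the iSCSI Target Server provides. The tolerance exists because a fixed-size VHDX is typically a little larger than its virtual size due to file metadata.

```python
from pathlib import Path

GIB = 1024 ** 3


def check_virtual_disks(folder, expected_bytes, tolerance=0.05):
    """Return a status per expected file: 'ok', 'missing', or a size mismatch note."""
    report = {}
    for name, expected in expected_bytes.items():
        path = Path(folder) / name
        if not path.is_file():
            report[name] = "missing"
            continue
        actual = path.stat().st_size
        # Compare within a tolerance instead of expecting an exact byte match.
        if abs(actual - expected) <= expected * tolerance:
            report[name] = "ok"
        else:
            report[name] = f"size mismatch ({actual / GIB:.1f} GiB)"
    return report


if __name__ == "__main__":
    expected = {"SQL-Data.vhdx": 100 * GIB, "Quorum-Witness.vhdx": 2 * GIB}
    for name, status in check_virtual_disks(r"D:\iSCSIVirtualDisks", expected).items():
        print(f"{name}: {status}")
```

A dynamic disk created by mistake would report a size mismatch, because its file starts out far smaller than the LUN size.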

Things that bite people in this part

Forgetting the network binding

Easiest mistake. iSCSI listens on all NICs by default. Without the binding step, an initiator that connects over the public IP puts storage traffic on the same wire as backup, monitoring, AD replication, etc. Unexplained latency spikes often trace back to this. Bind to storage NIC only.

Two targets instead of one

If you create Quorum-Witness on a separate target, the cluster sees Data and Quorum as two unrelated storage groups. Cluster validation passes, but configuration is fragile and adding more disks later requires duplicate ACL setup.

Dynamic size in production

Dynamic VHDX files grow as new regions are first written. A heavy SQL Server write into an unallocated region can stall, sometimes for seconds, while the host expands the file. Lab: dynamic is fine. Production: always Fixed.

CHAP forgotten

Lab: CHAP off is fine. Real environment: enable CHAP with mutual authentication. Without it, the initiator ACL is the only barrier, so any host on the storage subnet that can present an allowed IP or IQN can connect.

Disk size sprawl

The 150 GB raw disk holds 100 GB Data + 2 GB Quorum + headroom. Plan ahead — SQL data files want to grow. In production, monitor LUN free space and grow the underlying disk before SQL hits a write failure.
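The headroom arithmetic is simple enough to script. This sketch uses the sizes from this lab. The 20% alert threshold is a hypothetical value, and file system and VHDX metadata overhead are ignored.

```python
DISK_GB = 150  # raw disk behind the D: volume
LUNS_GB = {"SQL-Data": 100, "Quorum-Witness": 2}
MIN_FREE_FRACTION = 0.20  # hypothetical alert threshold, not from the article


def headroom(disk_gb, luns_gb):
    """Return (free GB, free fraction) after all fixed-size LUNs are allocated."""
    free = disk_gb - sum(luns_gb.values())
    return free, free / disk_gb


free_gb, free_fraction = headroom(DISK_GB, LUNS_GB)
print(f"{free_gb} GB free ({free_fraction:.0%})")  # 48 GB free (32%)
if free_fraction < MIN_FREE_FRACTION:
    print("Grow the underlying disk before adding or extending LUNs")
```

Adding a hypothetical 30 GB LUN would leave 18 GB (12%) and trip the warning, which is the kind of sprawl this section is about.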

Storage IP changes

If you ever renumber the storage subnet (e.g., 10.10.10.x → 192.168.50.x), the IP-based initiator ACL breaks. Production tip: scope by IQN instead, then nodes can change IPs without storage drama.
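To see why IQN scoping survives renumbering, here is a toy model of ACL matching in Python. It is not how the target server is implemented. The `IPAddress:` and `IQN:` prefixes are just labels for this sketch, and the IQN strings and the post-renumbering address 192.168.50.11 are hypothetical examples.

```python
def is_allowed(acl, initiator):
    """Allow the login if the initiator's IP or IQN matches any ACL entry."""
    candidates = {f"IPAddress:{initiator['ip']}", f"IQN:{initiator['iqn']}"}
    return bool(acl & candidates)


# Hypothetical IQNs; real values come from each node's initiator settings.
NODE01_IQN = "iqn.1991-05.com.microsoft:node-01.lab.local"
NODE02_IQN = "iqn.1991-05.com.microsoft:node-02.lab.local"

ip_acl = {"IPAddress:10.10.10.11", "IPAddress:10.10.10.12"}
iqn_acl = {f"IQN:{NODE01_IQN}", f"IQN:{NODE02_IQN}"}

node01_before = {"ip": "10.10.10.11", "iqn": NODE01_IQN}
node01_after = {"ip": "192.168.50.11", "iqn": NODE01_IQN}  # after renumbering

print(is_allowed(ip_acl, node01_before))  # True
print(is_allowed(ip_acl, node01_after))   # False: IP-based ACL breaks
print(is_allowed(iqn_acl, node01_after))  # True: IQN is unchanged
```

The IQN is tied to the initiator's identity rather than its address, so the ACL entry stays valid when the subnet changes.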

What’s next

SAN side built. Part 4 jumps to Node-01 and Node-02 and configures the iSCSI Initiators: discovering the target, logging in, formatting and mounting the LUNs. See the full series in the SQL Server Clustering pathway.